How AI is affecting the quality of factual information

- According to NewsGuard, more than 2,089 AI-generated news sites are currently operating, publishing content in 16 languages.

- In August 2025, leading AI chatbots relayed false claims in 35% of cases, compared to 18% the previous year.

- According to Entrust's 2025 Identity Fraud Report, a deepfake attack occurred every five minutes in 2024.

- Social media personalisation algorithms contribute to the fragmentation of the public sphere, creating echo chambers.

- It is necessary to evolve human countermeasures, such as moderation and media literacy, to adapt to this phenomenon.

A few years ago, creating a convincing fake video required considerable resources and advanced technical expertise. Today, all it takes is a few dollars and a couple of minutes. This democratisation of information manipulation through generative artificial intelligence poses new challenges for information verification and trust in the media. Recent data illustrates the scale of the phenomenon. According to NewsGuard, more than 2,089 AI-generated news sites are currently operating with little or no human oversight, publishing content in 16 languages, including French, English, Arabic and Chinese. This represents a 1,150% increase since April 2023.

“These social media platforms play a dual role,” explains Professor Thierry Warin, an analyst of economic dynamics in the era of big data and a specialist in information issues in the digital age. “On the one hand, they democratise speech. On the other, they can become a vehicle for spreading fake news on a large scale.” AI tools themselves sometimes contribute to this phenomenon. A study by NewsGuard shows that in August 2025, the leading AI chatbots relayed false claims in 35% of cases, compared to 18% the previous year. Perplexity went from a 100% false information refutation rate in 2024 to a 46.67% error rate in 2025. ChatGPT and Meta have an error rate of 40%.

Deepfakes represent a notable development in the field of content manipulation. According to Entrust’s 2025 Identity Fraud Report, a deepfake attack occurred every five minutes in 2024. Digital document forgeries increased by 244% compared to 2023, while overall digital fraud has grown by 1,600% since 2021. “The Centre for Security and Emerging Technology estimates that a basic deepfake can be produced for a few dollars and in less than ten minutes,” notes Thierry Warin. “High-quality deepfakes, on the other hand, can cost between $300 and $20,000 per minute.”

Electoral interference and information manipulation

The year 2024, marked by a large number of elections around the world, saw the emergence of sophisticated disinformation campaigns. The Doppelgänger campaign, orchestrated by pro-Russian actors in the run-up to the 2024 European elections, is a notable example. It combined seven domains impersonating recognised media outlets, 47 inauthentic websites and 657 articles amplified by thousands of automated accounts. The ‘Portal Kombat’ network (also known as ‘Pravda’) illustrates a systematic approach to information dissemination. According to VIGINUM, this Moscow-based network published 3.6 million articles in 2024 on global online platforms. With 150 domain names in 46 languages, it publishes an average of 20,273 articles every 48 hours.

NewsGuard tested ten of the most popular generative AI models: in 33% of cases, these models repeated claims disseminated by the Pravda network. “This content influences artificial intelligence systems that rely on this data to generate their responses,” the report states. This technique, known as ‘LLM grooming,’ involves saturating search results with biased data to influence AI responses. “Many recent elections have been marred by disinformation campaigns,” Thierry Warin points out. “During the 2016 US presidential election, the United States responded to Russian interference by expelling 35 diplomats. With generative AI, the scale of the phenomenon has changed.”

The role of personalisation algorithms

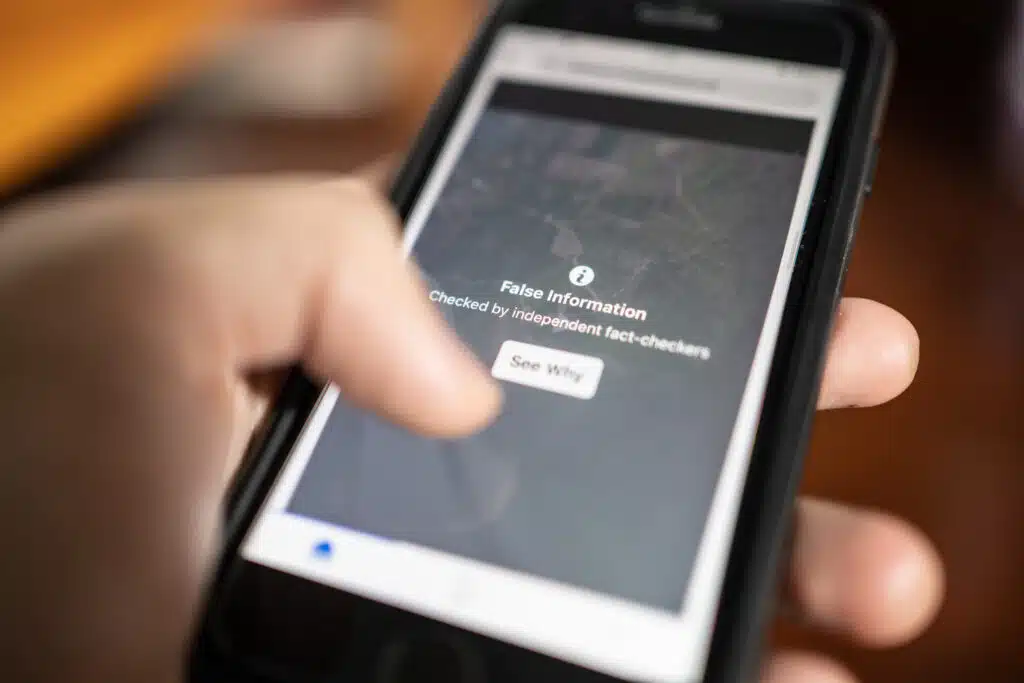

Beyond the creation of fake content, social media personalisation algorithms contribute to the fragmentation of the public sphere. “These systems tend to offer internet users content that matches their preferences,” explains Professor Warin. “This can create what are known as echo chambers.” Studies show that on Facebook, only about 15% of interactions involve exposure to divergent opinions. The content that generates the most interactions is often amplified by algorithms, which can reinforce ideological divides.

In response to these developments, several initiatives have been put in place. Finland and Sweden score highest in media literacy, with 74 and 71 points respectively on the European Media Literacy Index 2023. The European Commission has adopted the 2022 Strengthened Code of Practice on Disinformation to improve platform transparency. In Canada, the Communications Security Establishment published the report Cyber Threats to Canada’s Democratic Process – 2023 Update, which analyses the use of generative AI in contexts of information interference.

“Traditional countermeasures – human moderation, fact-checking, media literacy – must evolve to adapt to the scale of the phenomenon,” observes Thierry Warin. “Technological solutions, such as synthetic content detectors and digital watermarks, are currently being developed.”

Evolution of the information ecosystem

The year 2025 marks a significant change. The rate at which AI chatbots decline to answer sensitive questions has fallen to 0%, compared to 31% in 2024. At the same time, their propensity to repeat false information has increased. Models now prioritise responsiveness, which can make them more vulnerable to unverified content online.

“The adage that ‘information is power’, attributed to Cardinal Richelieu, remains relevant,” concludes Professor Warin. “From printing to television, each media revolution has redistributed the power of information. With generative AI, we are witnessing a major transformation of this ecosystem.” The question now is how to adapt the mechanisms of verification and trust in information to this new technological reality. Ongoing initiatives, whether technological, regulatory or educational, aim to address this challenge.