How is generative AI changing modern education?

- Released by OpenAI in 2023, GPT-4 achieved performance levels comparable to those of postgraduate students in logic, mathematics and academic writing exercises.

- Language models are changing the way students use their reasoning and analytical skills, which may weaken brain plasticity and the densification of neural networks.

- Knowledge acquisition can be weakened when AI systematically replaces intellectual effort, with direct consequences for written work, independent study and assessment methods.

- Many questions are being raised about the balance between algorithmic assistance and human cognitive effort, particularly given the rapid evolution of AI models.

- Frameworks are beginning to emerge in which AI is neither absent nor central but integrated in a differentiated manner according to the cognitive objectives pursued.

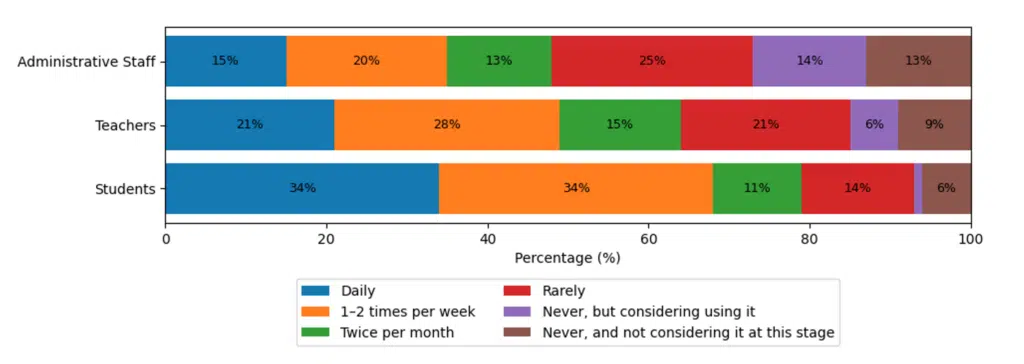

The emergence of generative artificial intelligence in education systems marks a technological turning point. Unlike the e‑learning platforms and teaching aids of the 2000s and 2010s, large language models now produce structured reasoning, synthesise complex corpora and interact with learners adaptively. GPT‑4, released by OpenAI in 2023, has achieved performance levels comparable to those of graduate students on logic, mathematics and academic writing exercises [1]. Other models, such as Anthropic’s Claude and Meta’s LLaMA, confirm this trend while exploring strategies for governing generated outputs and reducing bias [2]. These advances offer new opportunities to personalise learning paths, strengthen individualised tutoring and broaden access to educational resources. However, they also raise questions about frequency of use (see Figure 1) and about the cognitive conditions of AI-assisted learning, as certain aspects of knowledge acquisition may be undermined when this technology systematically replaces intellectual effort [3].

These issues directly affect key aspects of higher education, such as written work, independent study and assessment methods. Furthermore, these changes call for an approach that combines academic research and observation of teaching practices.

Cognitive effort put to the test by generative models

Large language models are changing the way students use their reasoning and analytical skills. GPT‑4, for example, performs at a level comparable to that of graduate students on logic, mathematics and text synthesis exercises [5]. This raises questions about the balance between algorithmic assistance and human cognitive effort. “The problem in education arises when students systematically use AI to write a presentation, thesis, dissertation or even to learn a concept,” says Michel Barabel, a lecturer at Université Paris-Est whose work focuses on organisational transformation, particularly skills management, professional training and learning cultures. Systematic outsourcing can hinder the development of essential cognitive abilities. “Research shows that in this case, the cost of cognitive effort increases rapidly and doing things on your own becomes increasingly difficult.”

The theoretical frameworks of cognitive psychology shed light on this phenomenon. Daniel Kahneman’s distinction between two modes of thinking (his “System 1” and “System 2”, referred to here as Type 1 and Type 2) serves as a reference point: Type 1 is fast, automatic and suited to routine tasks, while Type 2 is slower, deliberate and engaged in solving new situations and in creative work. Barabel observes that “the great promise of AI is precisely to outsource simple, repetitive Type 1 brain tasks in order to free up time for Type 2 activities.” Yet when AI is used repeatedly for fundamental Type 1 tasks, students lose the practice on which Type 2 thinking is built, which can limit the densification of the neural networks necessary for creativity and critical thinking.

A comparison with other uses of technology illustrates this risk. “This dependence raises a central question – that of a gradual impoverishment of cognitive abilities,” explains Barabel, referring to examples observed in other contexts, such as London taxi drivers’ navigation becoming dependent on GPS. Neuroscience research shows that learning based on active cognitive engagement promotes brain plasticity and the densification of neural networks, while a sustained reduction in analytical effort limits these mechanisms and weakens the mobilisation of skills in complex situations [6]. “However, we know that it is very difficult to bring out genuine creativity without this foundation of prior cognitive effort,” adds the expert.

This reflection on cognitive effort highlights that the effects of generative AI depend less on the technology itself than on how it is used. Thoughtful, well-regulated use can support creativity and productivity, whereas passive use can compromise the development of essential intellectual skills.

Educational resources undergoing gradual restructuring

The rapid spread of generative AI tools has brought to light uses that are already widespread among students, often outside any institutional framework. Several studies show that these tools are being used to write essays, structure dissertations and prepare assessments, without teaching methods having been designed to take this into account [7]. This situation has led some institutions to rethink not only regulation but also the very nature of the activities they offer.

According to Michel Barabel, “the issue cannot be reduced to a simple opposition between authorisation and prohibition. The problem should not be framed in these terms.” In his view, AI combines strong educational potential with real limitations. In the systems he observes, the challenge is to distinguish between types of activity according to the role assigned to the machine. “Some activities can be entrusted entirely to AI without any particular regulation,” particularly procedures or methodological assistance, while others must remain exclusively human. For the latter, “the use of AI is prohibited in order to preserve the educational relationship, Type 1 brain work, creativity and critical thinking.”

In practice, this differentiation takes the form of transparency measures. “At Sciences Po and IAE Paris-Est, students must explain their use of AI in a dedicated appendix. We authorise its use, but we require an AI appendix in all work,” explains Barabel. This requirement shifts the focus of assessment from the result alone to the intellectual process involved. “For us, the new form of plagiarism is not using AI, but using it without declaring it,” he adds.

Assessment methods have also been adjusted to take these practices into account. Barabel observes that “a presentation due the following week is now very likely to be produced 60, 70, or even 90 per cent with the help of AI.” In this context, “whereas written work used to carry more weight, oral work now predominates in order to assess students’ comprehension, understanding of concepts and ability to defend their reasoning.”

Educational uses range from delegation to assistance and augmentation, and are organised around how human and machine contributions are combined. “There are situations where AI assists the student,” notes Barabel, while in other configurations, “the student produces a first draft and AI helps them improve it through questioning and suggestions.” These experiments are gradually shaping a framework in which AI is neither absent nor central but integrated in a differentiated manner according to the cognitive objectives pursued.

Between promises of improvement and risks of fragmentation

The current limitations of generative AI in education have less to do with its technical performance than with the systemic effects it has on learning trajectories. The cognitive benefits observed depend heavily on the conditions of use, with sometimes contradictory results depending on the experimental protocols. Michel Barabel therefore urges caution in interpreting the initial data available. “Some studies, notably from MIT, have shown a rapid decline in certain cognitive abilities, but on very small samples and with questionable protocols,” he points out, while noting that other studies highlight positive effects on certain skills.

This heterogeneity of results points to a central question: the intensity and, above all, the nature of use. “It is not certain that there is a universal threshold,” says Barabel, emphasising that “the problem lies more in the organisation of activities than in the volume of interaction with AI.” He nevertheless proposes a theoretical balance in which “100% human should represent at least 20% of activities” in order to preserve the fundamental cognitive functions related to effort, independent thinking and judgement building.

Beyond individual effects, researchers are concerned that AI could amplify existing inequalities. Differences in access to the most powerful versions of the models, which often require paid subscriptions, are a primary source of differentiation. Barabel notes that “families’ economic capital could determine access to more powerful AI used in a private setting,” creating a cumulative advantage for certain students. Added to this are institutional disparities. “Some institutions have the financial and educational resources to deploy AI, train teachers and implement ethical charters. Others do not.”

These material differences are compounded by cultural and cognitive inequalities. “The major risk is therefore an increase in the gap between students,” points out Barabel, distinguishing between those who use AI strategically, those who use it mechanically, and those who reinvest the time freed up in activities with high cognitive value. Research in the sociology of education shows that these mechanisms of differentiated appropriation play a decisive role in the reproduction or transformation of school hierarchies [8].

Finally, the rapid pace of technological change poses a structural challenge for educational institutions. “What we say today may be called into question in a few months’ time by a new generation of AI,” says Barabel. This technological instability raises questions about the ability of education systems to combine innovation, equity and the development of fundamental human skills, in a context where the production and transmission of knowledge are themselves undergoing profound change.