Artificial intelligence (AI) is disrupting the world as we know it. It is permeating every part of our lives, in pursuit of goals that vary widely in ambition and desirability. Inevitably, AI and human intelligence (HI) are being compared. Far from coming out of nowhere, this comparison is rooted in historical dynamics that run deep within the AI project.

A long-standing comparison

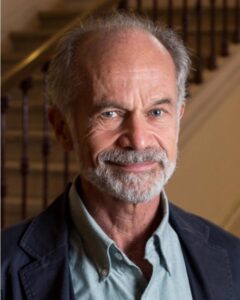

AI and HI, as fields of study, have co-evolved. Since the early days of modern computing, there have been two distinct approaches: AI developing in parallel with the study of HI, or disregarding it. “The founders of AI were divided into two approaches. On the one hand, those who wanted to analyse human mental processes and reproduce them on a computer, in a mirror image, so that the two undertakings would feed off each other. On the other, those who saw HI as a limitation rather than an inspiration. This trend was interested in problem solving, in other words in the result and not the process”, recalls Daniel Andler.

The tendency to compare AI and HI, visible in numerous publications, is therefore not a recent fad but part of the history of AI. What is symptomatic of our times is the tendency to equate the entire digital world with AI: “Today, all computing is described as AI. You have to go back to the foundations of the discipline to understand that AI is a specific tool, defined by the calculation that is being made and the nature of the task it is solving. If the task seems to involve human skills, we will be looking at the capacity for intelligence. That, in essence, is what AI is all about”, explains Maxime Amblard.

Two branches of the same tree

The two major trends mentioned above have given rise to two major categories of AI:

- symbolic AI, based on logical inference rules, which has little to do with human cognition;

- connectionist AI, based on neural networks, which is inspired by human cognition.

Maxime Amblard takes us back to the context of the time: “In the middle of the 20th century, the computing capacity of computers was tiny compared with today. So, we thought that to have intelligent systems, the calculation would have to contain expert information that we had previously encoded in the form of rules and symbols. At the same time, other researchers were more interested in how expertise could be generated. The question then became: how can we construct a probability distribution that will provide a good explanation of how the world works? It’s easy to see why these approaches exploded when the availability of data and memory and computing capacity increased radically”.

To illustrate the historical development of these two branches, Maxime Amblard uses the metaphor of two skis advancing one after the other: “Before computing power became available, probabilistic models were ignored in favour of symbolic models. We are currently experiencing a peak in connectionist AI thanks to its revolutionary results. Nevertheless, the problem of making the results comprehensible leaves the way open for hybrid systems (connectionist and symbolic) to put knowledge back into classic probabilistic approaches”.

For her part, Annabelle Blangero points out that today “there is some debate as to whether expert systems really correspond to AI, given that there is a tendency to describe systems that necessarily involve machine learning as AI”. Nevertheless, Daniel Andler mentions one of the leading figures in AI, Stuart Russell, who remains very attached to symbolic AI. Maxime Amblard agrees: “Perhaps my vision is too influenced by the history and epistemology of AI, but I think that to describe something as intelligent, it is more important to ask how what is produced by the computation is able to change the world, rather than focusing on the nature of the tool used.”

Does the machine resemble us?

After these historical and definitional detours, the question arises: are AI and HI two sides of the same coin? Before we can come up with an answer, we need to look at the methodological framework that makes this comparison possible. For Daniel Andler, “functionalism is the framework par excellence within which the question of comparison arises, provided that we call ‘intelligence’ the combined result of cognitive functions”. However, something is almost certainly missing if AI is to come as close as possible to human intelligence, which is situated in time and space. “Historically, it was John Haugeland who developed this idea of a missing ingredient in AI. We often think of consciousness, intentionality, autonomy, emotions or even the body”, Daniel Andler explains.

Consciousness and the associated mental states seem to be missing from AI. For Annabelle Blangero, this missing ingredient is simply a question of technical means: “I come from a school of thought in neuroscience where we consider that consciousness emerges from the constant evaluation of the environment and associated sensory-motor reactions. Based on this principle, reproducing human multimodality in a robot should bring out the same characteristics. Today, the architecture of connectionist systems reproduces fairly closely what happens in the human brain. What’s more, similar measures of activity are used in biological and artificial neural networks”.

Nevertheless, as Daniel Andler points out, “Today, there is no single theory to account for consciousness in humans. The question of its emergence is wide open and the subject of much debate in the scientific-philosophical community”. For Maxime Amblard, the fundamental difference lies in the desire to make sense. “Humans construct explanatory models for what they perceive. We are veritable meaning-making machines.”

The thorny question of intelligence

Despite these arguments, the question of how closely AI and HI can be brought together remains unanswered. In fact, the problem is primarily conceptual and concerns the way in which we define intelligence.

A classic definition would describe intelligence as the set of abilities that enable us to solve problems. In his recent book, Intelligence artificielle, intelligence humaine: la double énigme, Daniel Andler proposes an alternative, elegant definition: “animals (human or non-human) deploy the ability to adapt to situations. They learn to solve problems that concern them, in time and space. They couldn’t care less about solving general, decontextualised problems”.

This definition, which is open to debate, has the merit of placing intelligence in context and not making it an invariant concept. The mathematician and philosopher also reminds us of the nature of the concept of intelligence. “Intelligence is what we call a dense concept: it is both descriptive and objective, appreciative and subjective. Although in practice we can quickly come to a conclusion about a person’s intelligence in a given situation, in principle it’s always open to debate”.

Putting AI to work for humans

In the end, the issue of comparison seems irrelevant if we are looking for a concrete answer. It is of greater interest if we seek to understand the intellectual path we have travelled, the process. This reflection highlights some crucial questions: what do we want to give AI? To what end? What do we want for the future of our societies?

These are essential questions that revive the ethical, economic, legislative, and social challenges that need to be taken up by the players in the world of AI and by governments and citizens the world over. At the end of the day, there is no point in knowing whether AI is or will be like us. The only important question is what we want to do with it, and why.