Building responsible AI: ethics, sovereignty and the planet

- As new technologies emerge, ethical approaches to AI are taking shape.

- Ethical AI aims to embed ethical recommendations directly in AI code, whereas trustworthy AI seeks to provide a framework for overseeing automated decisions.

- Ethics by Design seeks to ensure the transparency and clarity of AI systems and their purposes; this must be complemented by Ethics by Evolution.

- Ethics by Evolution involves continuously adapting ethical criteria and indicators throughout the AI system’s learning period.

- One objective of Ethics by Evolution is to support tech professionals in developing human-centred technology.

Major technological advances have always sparked both hope and concern, and their potential for abuse largely reflects the failings of the societies that produce them. The digital revolution and the rise of artificial intelligence (AI), however, mark an unprecedented shift: for the first time, the future of humanity is being shaped by lines of code and by systems capable of acting on a large scale across all spheres of society, in both professional and personal life.

The breakneck pace of these innovations exceeds our collective capacity for anticipation and regulation, and is even affecting human cognition. Against this backdrop of profound civilisational uncertainty, it is becoming essential to structure and evaluate the ethics of AI systems in order to steer these technologies towards serving human interests.

Ethics by Design and Ethics by Evolution

Society does not yet have any truly established universal rules for embedding human values at the heart of AI systems. Although initiatives are multiplying, such as the European AI Act, which came into force on 1 August 2024 and aims to ensure trustworthy AI, the rapid rise of digital technology makes it urgent to define a common ethical and sovereign framework. The aim is to guarantee the purpose, security, transparency, environmental responsibility and sovereignty of intelligent systems so as to strengthen trust in their use.

Consequently, designers of AI applications must incorporate the risks, limitations and societal impacts of their systems from the outset. Two complementary approaches can be distinguished: “Ethical AI”, which involves directly embedding principles, moral reasoning mechanisms and ethical recommendations into the code to enable the machine to make ethically sound decisions; and “Trustworthy AI”, which primarily aims to oversee and control automated decisions to ensure they comply with collective values.

The first approach appears to be more structural and evolutionary, as it allows ethics to be embedded at the very heart of the design and evolution of systems, following a logic of Ethics by Design¹ and then Ethics by Evolution².

The concepts of moral “values” and “principles” are complex and difficult to translate directly into computational structures. It is more practical, however, to break them down into explicit standards and rules, that is to say, into tangible instructions and recommendations applicable in defined contexts – this is what might be called the process of decoding and then ethical encoding of AI. From this perspective, ethics must be integrated right from the design stage of systems, by anticipating potential dilemmas and translating shared principles into rules embedded in the code. Given AI’s decision-making autonomy and learning capabilities, this integration is essential to ensure the transparency, safety and accountability of digital technologies.
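To make the idea of decoding principles into explicit, context-bound rules more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a method prescribed by this article: the names `Decision`, `EthicalRule`, `evaluate_decision` and `PROTECTED_ATTRIBUTES`, the contexts and the two example rules (no protected attributes as inputs; no refusal without an explanation) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical set of attributes treated as protected in this illustration.
PROTECTED_ATTRIBUTES = {"gender", "ethnicity", "religion"}


@dataclass
class Decision:
    """An automated decision together with the context it was made in."""
    context: str            # e.g. "credit_scoring" (illustrative)
    features_used: set[str]
    outcome: str            # e.g. "approved" or "refused"
    explanation: str = ""


@dataclass
class EthicalRule:
    """A principle 'decoded' into an explicit, checkable rule for given contexts."""
    principle: str
    contexts: set[str]
    check: Callable[[Decision], bool]  # returns True when the rule is respected
    message: str


# Hypothetical rules: each one encodes a shared principle for defined contexts.
RULES = [
    EthicalRule(
        principle="non-discrimination",
        contexts={"credit_scoring", "recruitment"},
        check=lambda d: not (d.features_used & PROTECTED_ATTRIBUTES),
        message="decision relies on a protected attribute",
    ),
    EthicalRule(
        principle="transparency",
        contexts={"credit_scoring", "recruitment"},
        check=lambda d: d.outcome != "refused" or bool(d.explanation.strip()),
        message="refusal issued without an explanation",
    ),
]


def evaluate_decision(decision: Decision, rules=RULES) -> list[str]:
    """Return a description of every applicable rule the decision violates."""
    return [
        f"{rule.principle}: {rule.message}"
        for rule in rules
        if decision.context in rule.contexts and not rule.check(decision)
    ]


if __name__ == "__main__":
    d = Decision("credit_scoring", {"income", "gender"}, "refused")
    for violation in evaluate_decision(d):
        print(violation)
```

Keeping each rule tied to explicit contexts reflects the point that principles become workable only as instructions "applicable in defined contexts"; a rule simply does not fire outside the contexts it was written for.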

The challenge lies in developing algorithms capable of acting in contexts that have ethical implications. This ambition gives rise to two main categories of risk: those linked to design flaws and those arising from learning mechanisms. Giving direction to AI therefore involves articulating three complementary dimensions. First, orientation and purpose, which define the strategy and governance of systems. Second, meaning, which reminds us that AI must remain a tool at the service of society, fostering human-machine complementarity, as well as explainability and inclusion. And finally, explanation, which involves collective reflection on the objectives pursued and their legitimacy. Implementing such an ethical framework remains complex, however, and raises unavoidable questions.

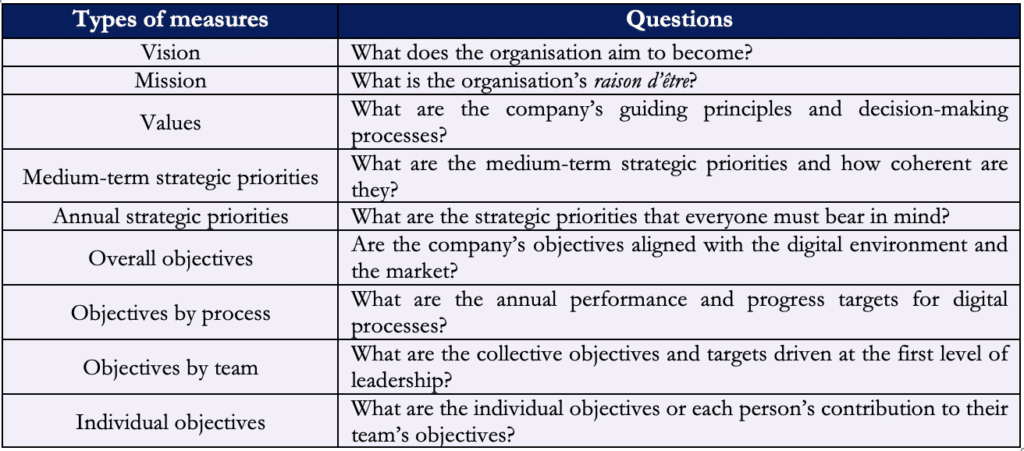

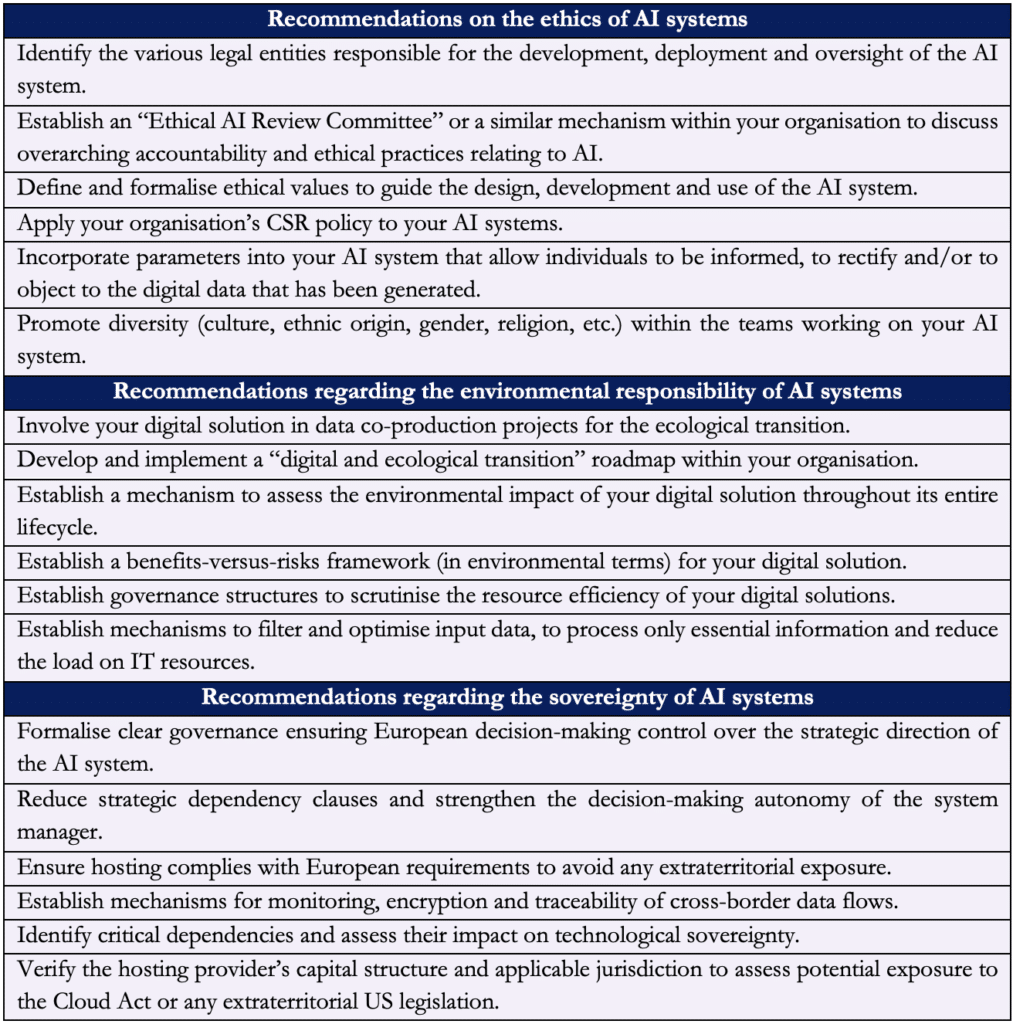

The whole point of ethical management is to give meaning and purpose to the organisation that has been put in place (see Table 1).

Hence, it is critical to adopt an inclusive approach that involves all stakeholders from the outset of the design phase of AI systems, in order to ensure transparency, explainability and clarity regarding their purposes: this is the principle of Ethics by Design. This approach must then be extended throughout the entire AI lifecycle – deployment, use and evolution – by continually adapting ethical criteria and indicators in line with the system’s learning process: this is Ethics by Evolution.

Algorithmic ethics

Ethics as applied to digital technology lies in the intention directed towards the purpose and meaning of an algorithmic system. It can be divided into three types of ethics (see Table 2)³:

- Descriptive ethics: this applies to intrinsic value (design). It constitutes an ethics of application and allocation in the form of practice, involving the means, mechanisms, channels and procedures implemented;

- Normative ethics: this concerns management value (implementation). It forms an ethics of regulation with a deontological aspect via established standards, codes and rules;

- Reflective ethics: this applies to operational value (use). It represents an ethics of legitimisation based on questioning the foundations and purposes through human principles and values.

The articulation and arrangement of these three types of ethics apply to the entire life cycle of an algorithmic system (design – implementation – use) to inform our Ethics by Evolution.

A proactive approach to ethical inclusion, based on user involvement and ongoing interaction with AI systems, enables the gradual strengthening of trust, reliability and transparency in algorithms. Whilst algorithms are often accused of discrimination or bias, we must nevertheless question human responsibility – an algorithm remains a tool, shaped by the data it is fed, the design choices made and the ways in which we use it. The real risks lie particularly in biases (cognitive, statistical or economic), which can be embedded, implicitly or explicitly, in models and render them unfair or malicious. This reality compels us to take a step back from our practices and question the direction we are taking: are we truly building a “digital humanity” that lives up to our moral and societal values?

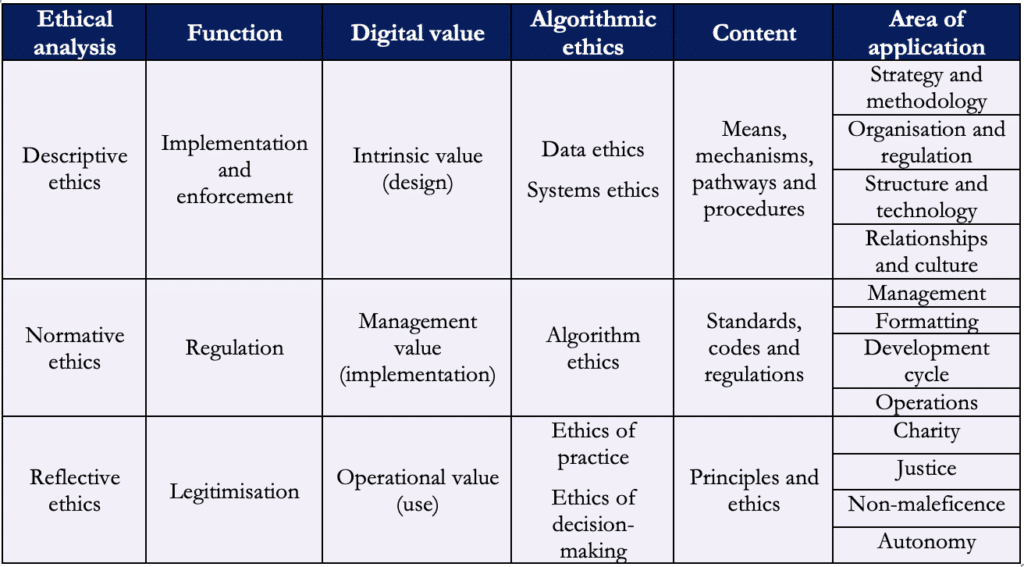

In these circumstances, it becomes essential to make specific recommendations regarding the ethics, environmental responsibility and sovereignty of AI systems. A few examples of measures to be implemented are shown in Table 3.

Finally, it seems essential to guide tech professionals towards a responsible development approach, incorporating a form of emotional intelligence and a heightened awareness of the human impacts of their work. This is precisely the aim of an Ethics by Evolution approach, designed to put people back at the heart of technology by assessing every stage of an algorithm’s lifecycle and measuring its level of ethical commitment.

This approach is structured in several phases. The first focuses on contextual ethics: it examines the system’s objectives, the conditions of its design, the nature and representativeness of the data used, as well as raising teams’ awareness of the risks of bias and societal issues. The second phase consists of an experimental evaluation of the system, through the analysis of input and output data and the measurement of indicators such as reliability, interpretability, robustness and the absence of discrimination. Finally, an ethics of results ensures continuous monitoring of the algorithm in real-world use, to anticipate and adjust its behaviour.
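The second and third phases can be illustrated with a small Python sketch. It assumes that "absence of discrimination" is measured by demographic parity difference (the largest gap in positive-prediction rates between groups) and "reliability" by plain accuracy; both are common choices but are swapped in here for illustration, not indicators specified by the article. `ResultsMonitor`, its sliding window and its `max_gap` tolerance are hypothetical.

```python
from collections import deque


def demographic_parity_difference(predictions, groups):
    """Largest gap in positive-prediction rate between any two groups.

    predictions: iterable of 0/1 model outputs
    groups: iterable of group labels aligned with predictions
    """
    counts = {}  # group -> (number of positive predictions, total predictions)
    for pred, group in zip(predictions, groups):
        positives, total = counts.get(group, (0, 0))
        counts[group] = (positives + pred, total + 1)
    rates = [p / t for p, t in counts.values()]
    return max(rates) - min(rates) if rates else 0.0


def accuracy(predictions, labels):
    """Share of predictions that match the reference labels (reliability proxy)."""
    pairs = list(zip(predictions, labels))
    return sum(p == y for p, y in pairs) / len(pairs) if pairs else 0.0


class ResultsMonitor:
    """Ethics-of-results sketch: watch recent live decisions and flag disparities."""

    def __init__(self, window=1000, max_gap=0.1):
        self.window = deque(maxlen=window)  # keeps only the most recent decisions
        self.max_gap = max_gap              # hypothetical tolerance threshold

    def record(self, prediction, group):
        self.window.append((prediction, group))

    def needs_review(self):
        """True when the parity gap over the current window exceeds the tolerance."""
        if not self.window:
            return False
        preds, groups = zip(*self.window)
        return demographic_parity_difference(preds, groups) > self.max_gap
```

The two functions correspond to an offline, experimental evaluation on input and output data, while the monitor re-applies the same indicator to real-world use; because the window slides, an alert can also clear once the recent decisions are balanced again, which is one way of "adjusting" rather than freezing the criteria.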

Ultimately, whilst AI holds great promise, it also entails risks that must be managed to ensure its compliance with the law, moral values and the common good. Integrating ethical criteria today, despite the additional complexity they entail, is an essential prerequisite for establishing a genuine culture of digital ethics and ensuring security, meaning and trust in data processing within organisations and regions.