AI and productivity: rethinking workforce training and social cohesion

- AI has the potential to improve productivity without reducing workload: the actual gains are reinvested in increased activity rather than in freed-up time.

- The same technology can either enhance human capabilities or replace them, depending on how it is deployed.

- Young graduates are paying a heavy price as junior roles are the first to disappear, severing the traditional chain of transmission of professional expertise.

- A Marshall Plan for training is urgently needed: curriculum reform, mass retraining and investment in human capital on a par with AI infrastructure.

- Like the “Engels pause”, AI could concentrate its benefits among the few — unless we invent new forms of solidarity and deliberately direct progress towards workers.

Rarely has a technology sparked so much enthusiasm and so much anxiety at the same time. On the one hand, the promise of productivity gains unseen since the 1970s; on the other, the spectre of dwindling job prospects for an entire generation of young graduates. But these two realities are not contradictory. Together, they make up a fundamental contemporary equation: AI is an amplifier, of value for those who prepare for it and of inequality for those left behind. To fully grasp this challenge, we must follow the thread that runs from productivity to employment, from employment to business organisation, and then from organisation to a collective ambition for training that matches the scale of the challenge. That is the journey this article sets out to take.

Productivity: a promise beginning to materialise

Let’s start with some good news: signs of a real productivity surge are beginning to mount. In January 2026, economists at Apollo Global Management, led by Torsten Slok, identified tangible productivity increases in DevOps, process automation and support functions1. The most comprehensive summary available to date is the Early Signals of AI Impact dashboard, which aggregates 303 sources in real time (academic studies, sector reports, field data) across 17 indicators of AI-driven labour transformation, including individual productivity, recruitment trends, shifts in required skills and team restructuring. Its consensus assessment is clear: the adoption of AI tools is accelerating across all industries, productivity measured in pioneering companies is rising, and roles involving repetitive tasks are being reduced. The crucial nuance can be summed up in a single word, ‘yet’: jobs are changing faster than they are disappearing, and no aggregate macroeconomic shift is yet measurable. It is precisely this ‘yet’ that lends the issue of preparedness its sense of urgency2. But AI does more than just replace tasks: it transforms the very pace of innovation. Bontadini, Haskel and their co-authors describe it as a ‘meta-innovation’, an innovation in the method of innovation, with multiplier effects on total factor productivity that the US economy is only just beginning to feel, whilst Europe is lagging behind in adoption to a worrying extent3.

Nevertheless, two caveats are in order. The first relates to Moravec’s paradox: AI excels at cognitively complex tasks — writing, summarising, coding — but remains clumsy at the contextual manual tasks that seem easy to us. This discrepancy partly explains the gap between the touted potential and actual adoption in the economy4. The second caveat is even more counter-intuitive: according to a Harvard Business Review study published in February 2026, AI does not lighten the workload — it intensifies it. Employees who adopt AI tools handle more cases, respond to more requests and take on new tasks. The productivity gain is real, but it does not automatically translate into freed-up time: it is reinvested in additional work5.

Another key finding from the research by the NBER and MIT is that AI can boost workers’ productivity without necessarily automating entire tasks. This distinction between augmentation and automation is not merely semantic — it determines the full range of the technology’s social impacts. In augmentation mode, AI becomes a cognitive prosthesis: it extends the individual’s capabilities, enabling them to handle greater complexity, produce faster, and better document their decisions. Jobs are preserved and often enhanced. In automation mode, it replaces human activity itself: jobs may disappear or undergo radical transformation. Both modes coexist in most organisations — sometimes within the same role, depending on the tasks and the circumstances6.

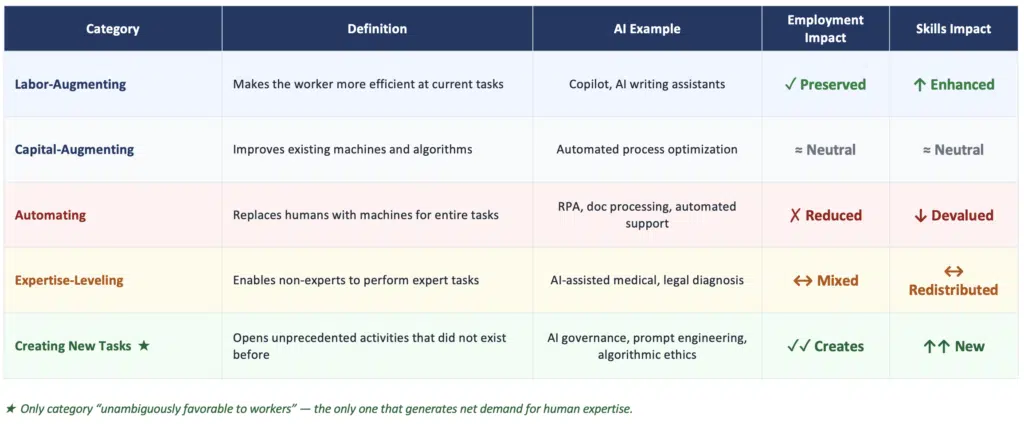

Acemoglu, Autor and Johnson refine this framework further by distinguishing five categories of technology, two of which deserve particular attention. ‘Expertise-levelling’ technologies enable non-experts to perform tasks previously reserved for specialists — medical AI for diagnostic support is one example. ‘New task creation’ technologies, on the other hand, are the only ones that are ‘unambiguously favourable to workers’: they generate a net demand for human expertise by opening up activities that did not previously exist. It is in this latter category that the most solid promise of AI lies — though it is also the most demanding in terms of training7.

The impact on jobs: who are the canaries in the coal mine?

The metaphor chosen by Erik Brynjolfsson for his summer 2025 study is striking: in coal mines, canaries were sent in to detect toxic gases before the miners entered. Young workers in sectors exposed to AI are, today, those canaries8.

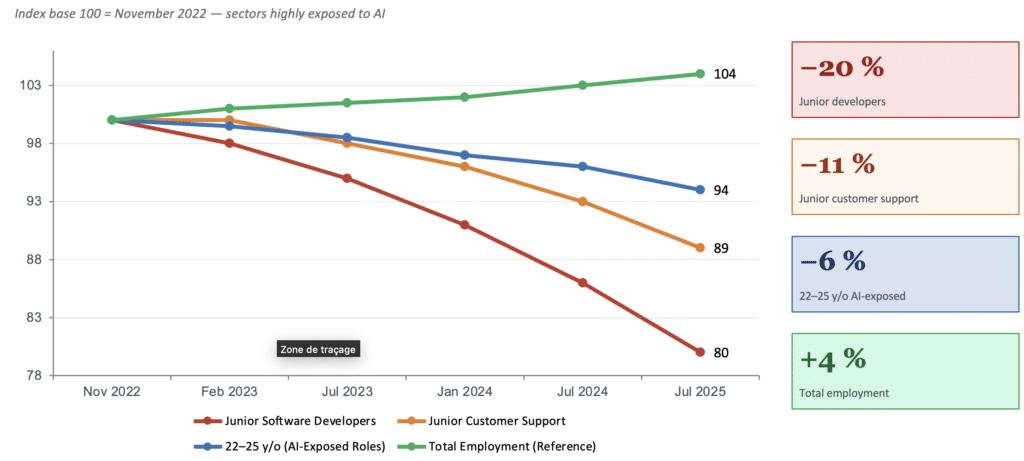

The ADP data — the most granular ever used for this type of analysis — speaks for itself: in the United States, between late 2022 and July 2025, employment among 22- to 25-year-olds in roles highly exposed to AI fell by 6%. For young software developers, the decline reached 20% compared to the peak in November 2022. Entry-level customer support roles also lost nearly 11% of their junior staff. It is the entry-level positions that are disappearing first9.

This assessment echoes the findings of the Financial Times’ investigation into the ‘great graduate jobs drought’: entire cohorts are graduating from top universities and struggling to find roles commensurate with their qualifications, not because AI has replaced them, but because it has rendered the foundational learning experience provided by junior roles redundant10. The consequence is perverse and deserves our attention: if young people no longer acquire their expertise on the job in these first roles, the chain of transmission of professional expertise is broken. AI can amplify expertise — but it cannot create it from scratch. If the generation entering the labour market today does not build its foundations, who will train the experts of 2035?

However, we must not confuse exposure with net destruction, and the causality itself deserves to be questioned. Brynjolfsson himself points out that the slowdown in hiring in jobs exposed to AI began in spring 2022, i.e. before the release of ChatGPT in November 2022; the Fed’s increase in key interest rates is at least as plausible an explanation11. Even more surprising, Yale’s Budget Lab observes no significant difference between employment levels in the jobs most exposed to AI and those least exposed: “the lines look the same”, in the words of its chair. What the exposure metrics do capture with certainty is a decline in job vacancies in the 40% of occupations most exposed since the rise of ChatGPT, which is not equivalent to net job losses. The economy as a whole continues to create jobs; it is their structure that is changing, and with it the nature of the skills required12.

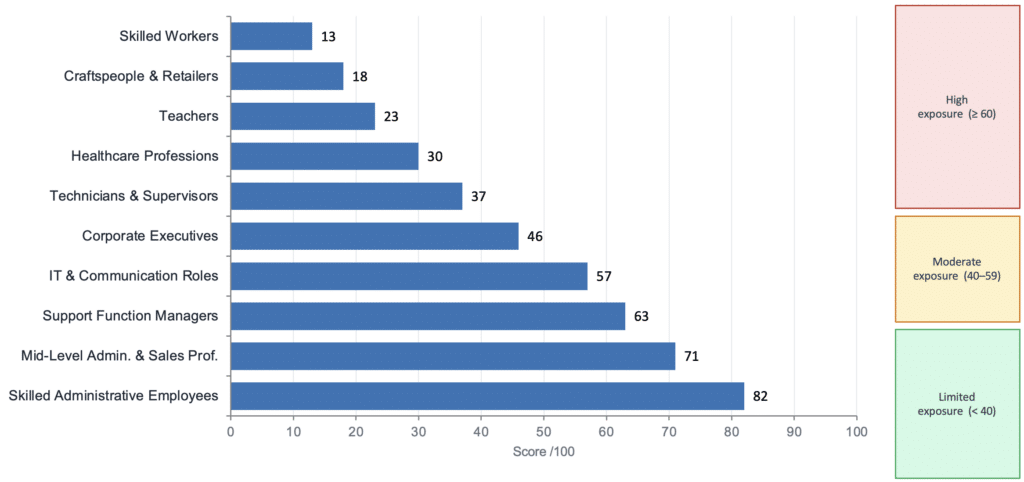

In France, the picture is similar. Projections for 2026–2030 identify several socio-professional categories that are particularly vulnerable: intermediate administrative professions, certain support function managers, and skilled office workers. France’s social model does not make it immune: like its partners, it is exposed to an accelerated restructuring of its labour market13.

David Autor offers a more nuanced and, ultimately, more optimistic perspective: AI could restore value to skilled labour by amplifying what humans do best — judgement, relationships and contextual creativity. It could even be the catalyst for a revival of skilled middle-class jobs, provided that the technology is geared towards augmenting human capabilities rather than simply replacing them. But this prospect requires active policy measures. It will not materialise spontaneously14.

Businesses and AI: between hesitant adoption and profound transformation

While the macroeconomic effects remain to be consolidated, the effects of AI on the internal organisation of businesses are already noticeable. The first lesson from the most rigorous empirical studies is that results depend far less on the technology itself than on the organisational choices that accompany it. Two companies equipped with the same tools can achieve radically different results depending on whether they have redesigned their processes, trained their teams and redefined roles — or not15.

This tension between automation and augmentation lies at the heart of the Beyond the Binary report: the two dynamics coexist and combine. The same technology can automate routine tasks whilst enhancing the capacity to focus on high-value-added tasks. Empirically, three adoption profiles have been identified: ‘cyborgs’, who seamlessly integrate AI into their daily workflow; ‘centaurs’, who alternate between working autonomously and delegating to AI depending on the nature of the tasks; and ‘self-automators’, who programme AI to take over entire portions of their work. These three modes do not have the same implications for the skills required, the risks of obsolescence or training needs. The question is therefore not “does AI replace?” but “how is it deployed, by whom, for what purposes, and with what safeguards?”16

One category under particular scrutiny is that of middle managers. AI brings to light hierarchical layers whose added value—information sharing, coordination, reporting—is precisely what automated systems can now handle17. But the managerial role is not limited to the flow of information: it includes talent development, conflict management, contextual interpretation, and trust in teams—skills that the McKinsey Global Institute identifies as the big winners of the AI era, for which demand is growing precisely because LLMs cannot replicate them. The manager of tomorrow is not the one who filters information — it is the one who creates meaning18.

Finally — and this is perhaps the most important lesson — AI that benefits workers is not built by default. Research from MIT Sloan shows that companies which have successfully deployed AI that enhances their employees’ skills have done so deliberately, refusing to let the logic of automation alone dictate architectural choices. This is not an abstract moral imperative: it is a prerequisite for sustainable performance, in a context where talent retention and internal trust are becoming decisive competitive advantages19. Acemoglu, Autor and Johnson go further: it is not merely a matter of corporate choice, but a documented market failure. Three cumulative factors are structurally driving the industry towards automation: path dependence (large firms have built their business models on automation tools from which they derive their profitability); the dominant ideology in research laboratories, which is oriented towards AGI and largely insensitive to labour effects; and the growing concentration of the sector, which stifles alternative models. “We are not currently on the pro-worker AI path,” states Simon Johnson. This failure calls for responses that go beyond the goodwill of companies20.

For a Marshall Plan for training

If AI is reducing entry-level roles, it is undermining the main mechanism through which companies have always passed on their human capital: on-the-job learning. All types of human-AI interaction — from ‘cyborgs’ to ‘centaurs’ and ‘self-automators’ — require prior expertise that AI serves to amplify. But this expertise is built up in the early years of a professional career, in junior roles which are precisely the ones that are disappearing. The systemic risk is real: by short-circuiting early-career learning, AI risks creating a generation of professionals without solid foundations — buildings without a ground floor21.

This assessment calls for a response on the scale of the Marshall Plan. The analogy is not merely rhetorical: the 1948 plan was a collective and structured response to the massive destruction of physical capital. AI is causing the accelerated destruction of human capital — not through violence, but through obsolescence. The Oxford research on technological unemployment serves as a useful reminder that every major wave of automation has brought about unexpected adaptations; yet no previous wave has had this pace or this cross-cutting impact across the entire spectrum of cognitive functions, from the bottom of the ladder to the most highly skilled professions22.

Three areas of work are required in parallel. The first is curriculum reform: from primary education to the grandes écoles, we must integrate not only skills for interacting with AI, but above all the abilities that LLMs cannot replicate — critical judgement, reasoning, contextual creativity, and relational intelligence. Research on K‑12 skills in the AI era shows that this reform is feasible on a large scale if we commit the necessary resources to prioritising it23.

The second priority is a massive retraining programme, targeting the 40% of occupations most at risk. Granular data from Yale’s Budget Lab enables these interventions to be prioritised with unprecedented precision. The challenge is to develop short, certified pathways accessible to those currently in employment, with tripartite governance involving the state, professional bodies and businesses. Speed is crucial here: skills have an increasingly short lifespan and continuing professional development systems were designed for a world where obsolescence was measured in decades, not years.

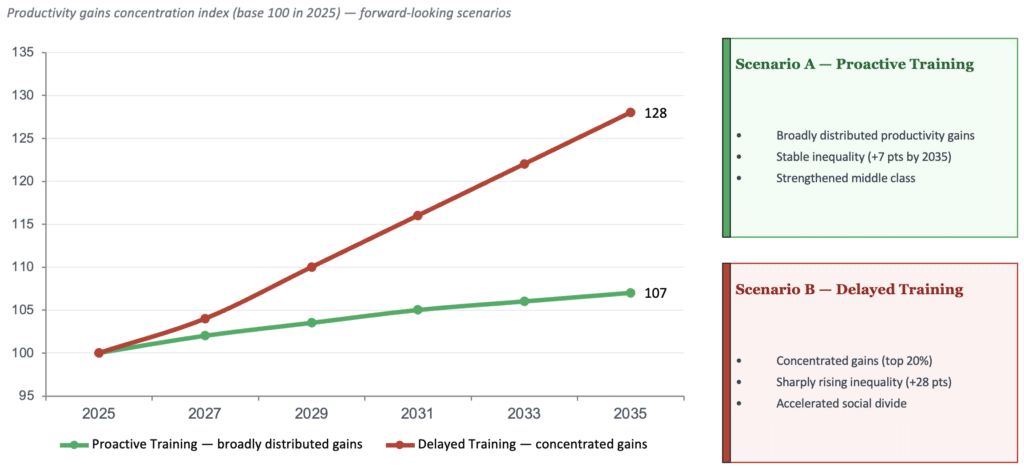

The third area of focus is financial. Companies are investing hundreds of billions in AI infrastructure: data centres, chips, foundation models. A comparable investment in human capital is not merely a moral obligation: it is the prerequisite for the model’s social sustainability. The economic scenarios for transformative AI developed in February 2026 in London, as part of a forward-looking exercise bringing together economists and policymakers around the Windfall Trust and Gresham College, illustrate this precisely: in all trajectories where productivity gains are widely distributed, investment in training has consistently preceded, or accompanied, technological deployment. The converse is equally true: where training has been deferred, the benefits of AI have been concentrated among a few players, widening inequalities rather than reducing them24.

These three areas of focus — curricula, reskilling, and funding — are necessary. But they are not enough unless the root cause is addressed: the direction of AI itself. Acemoglu, Autor and Johnson identify three complementary policy levers. The first is the application of competition law to break up concentration in the sector and open the market to new entrants with business models less focused on pure automation. The second is the legal protection of professional expertise: workers whose know-how is absorbed by AI systems should have rights over this contribution, as a safeguard against ‘theft of expertise’25. The third is the institutionalisation of workers’ voices in deployment decisions — at both company level and in sectoral regulation. Training humans to adapt to AI is essential; orienting AI towards humans is just as urgent26.

In the face of social upheaval, creating new forms of solidarity

This training plan is therefore essential for those whose skills are threatened by artificial intelligence. However, history teaches us that industrial revolutions disrupt traditional social frameworks. When they are massive, productivity gains are not merely a statistical fact; they herald a profound structural transformation.

In the 19th century, the harnessing of mechanical and thermal power brutally drove peasants from their fields and displaced artisans in favour of factories operated by low-skilled workers. This process created an urban proletariat plunged into poverty by the glut of labour supply. Despite a spectacular rise in GDP, workers’ living conditions stagnated for nearly half a century. This is the ‘Engels pause’27, the period during which the industrial elite captured the bulk of the wealth generated by productivity gains. This upheaval was also characterised by a devastating loss of social bearings: forced displacement, the breakdown of family solidarity, the erosion of traditional workplace hierarchies, and subsistence wages. The economy was thus ‘disembedded’ from society, to use Polanyi’s term28. The intellectual and political response was vigorous: from Karl Marx’s Capital (1867) to the formation of revolutionary and reformist movements, a balance of power developed between capital and labour, gradually leading to modern social security, without the ‘great revolution’ that had been predicted.

This historical overview is, of course, simplified. The slow construction of the social contract was neither natural nor peaceful. It took place under the brutal pressure of political and geopolitical upheavals: colonial expansion, the clash of imperialisms, the Soviet revolution and the world wars. The social contract also long relied on the emergence of alternative jobs in the service sector, capable of absorbing the workforce rendered redundant in agriculture and industry. Douglass North emphasises that the survival of such a system depends on the evolution of its institutions to manage these transitions29.

Because it is cognitive, the AI revolution will spread more rapidly than that of the engine, which makes intervention all the more urgent: a 60-year ‘Engels pause’, or even a shortened one, would be unacceptable in the 21st century. In its physical applications, AI relies on existing robot architectures or those that can be reprogrammed within a relatively short timeframe. Like electricity, AI is a cross-cutting technology capable of massively increasing productivity across all sectors. Everything depends on the pace of deployment and the real-world impact, but the risk exists and must be analysed and anticipated. AI could bring about changes comparable to those of previous industrial revolutions; the open question is when, particularly in a Europe that has underinvested in technology and R&D for over 10 years. Furthermore, for the past 20 years, the internet revolution has concentrated profits among a small number of players capable of investing heavily in industrial and human capital. This accumulated capital enables American Big Tech to position itself at the forefront of AI by acquiring rival start-ups, recruiting the best talent and securing the electrical resources needed for deployment. These strategies are reminiscent of those of the oil oligopolies of the second industrial revolution, which were ultimately dismantled at the start of the 20th century.

Faced with the risk of being left behind, we struggle to envisage future sectors to fall back on. Although care for an ageing population, education, leisure and healthcare are powerful drivers of employment, jobs in these sectors are currently poorly paid and unattractive. At the same time, technology is reigniting geopolitical tensions: it is, for example, one of the root causes of Sino-American friction. We must therefore prepare for a dual revolution: the internal impact of technology on our production systems and the external effects of a new distribution of wealth. As Acemoglu and Johnson point out30, following on from Korinek and Stiglitz31, adjustment is unlikely to occur spontaneously and requires us to ‘steer’ technical progress32. This position is contested by those who fear the risks of regulatory capture; nevertheless, it raises an interesting question that we probably need to anticipate.

It is therefore prudent to work on innovative paradigms, particularly in terms of institutions and financing. The options frequently proposed all represent a break with existing systems: universal basic income, free access to public services, and a deliberate reduction in productivity through shorter working hours. It is too early to take a definitive stance, but these programmes still need to be financed. The issue appears straightforward, since rising productivity increases the pool of wealth to be distributed. There is talk of taxes on robots33 and productive capital, or even on data, since taxing labour alone will be insufficient34. Another option is the creation of a very large social protection fund, managed like a pension fund and funded by contributions from new companies in return for the provision of the social infrastructure to which they owe their success. It would be modelled on the Alaska Permanent Fund, which redistributes a fraction of the state’s oil revenues to citizens, or on the academic proposals for a ‘citizen fund’ developed by John Roemer and taken up by Daron Acemoglu. In the same vein, David Autor and Neil Thompson (MIT) propose a ‘Universal Basic Capital’: every child would receive a portfolio of productive assets at birth, granting them a permanent right to capital income, including income from automation, without relying on politically vulnerable budgetary transfers. Further ideas are needed, as the sums mentioned here do not measure up to the challenges at stake. Moreover, these proposals require a global consensus due to the mobility of capital and entrepreneurs. This debate must take place before undesirable solutions are imposed on us by developments in the labour market, where we would be ‘acted upon’ rather than ‘acting’.

The economist David Autor puts it this way: AI could rebuild the middle class by amplifying what humans do best. Brynjolfsson reminds us, with data to back it up, that for the time being, it is the youngest who are paying the price of the transition. Between these two truths, there is no contradiction — there is an agenda.

AI is an amplifier. It amplifies the productivity of organisations that prepare for it; it amplifies inequalities in those that do not. It can amplify the value of human judgement; it can also amplify the vulnerability of those who lack access to training. The real question is not a technological one. It is a political one: do we have the collective will to make training as urgent a priority as investment in digital infrastructure, and to find creative and realistic solutions to fund the transformation of the system? The answer to this question will determine whether the AI dividend benefits a few or everyone.